The term “artificial intelligence” (or “AI”) is in vogue and is often incorrectly used to refer to things that do not technically qualify as AI. Processors can add apparent “intelligence” to machines by enabling them to turn thermostats on and off at certain times and make well-intended recommendations on which pants go with which shirt, but these are just programs that run through large amounts of data and derive associations. Predictive analytics can apply these associations to future events, appearing to act like AI. Here, we focus more narrowly on AI in the context of using computers to mimic (or exceed) human intelligence.

There are three main calibers, or levels, of AI. The first caliber is called artificial narrow intelligence (ANI), which refers to using computer power to excel in one very specific area, such as playing chess, optimizing hybrid vehicle efficiency or making product recommendations. Practically every type of intelligent system we use today fits into this category.

Artificial general intelligence (AGI) is the second caliber, and it refers to computers that are roughly as intelligent as humans and that can perform practically any intellectual task we can. Computers at this level need to be able to reason, plan into the future, understand complex concepts and learn, including from experience. Ray Kurzweil, an American author, computer scientist, inventor and futurist, has estimated that computers would have to process 10 quadrillion (or 10^16) calculations per second to equal the power of the human brain. China’s Tianhe-2 supercomputer is currently the world’s fastest, capable of operating at 33.8 petaflops (or quadrillion floating-point operations per second), so, measured by raw speed alone, it appears to be fast enough for AGI.
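The comparison between Kurzweil’s estimate and Tianhe-2’s speed can be checked with a few lines of arithmetic. The Python sketch below uses only the two figures quoted above; the function name `speed_ratio` is hypothetical, and treating one floating-point operation as one “calculation” is a simplifying assumption made here, not something the estimate itself states.

```python
# Figures quoted in the text above.
KURZWEIL_BRAIN_CPS = 10 * 10**15   # 10 quadrillion (10^16) calculations per second
TIANHE2_PETAFLOPS = 33.8           # quoted speed of Tianhe-2
FLOPS_PER_PETAFLOP = 10**15        # 1 petaflop = 10^15 floating-point ops per second


def speed_ratio(machine_petaflops: float,
                target_cps: float = KURZWEIL_BRAIN_CPS) -> float:
    """Return machine speed divided by the target rate.

    Assumption (ours): one floating-point operation counts as one
    'calculation', which is only a rough equivalence.
    """
    return machine_petaflops * FLOPS_PER_PETAFLOP / target_cps


ratio = speed_ratio(TIANHE2_PETAFLOPS)
print(f"Tianhe-2 runs at about {ratio:.2f}x the estimated rate")
```

Under that assumption, the machine’s quoted speed is a little over three times the estimated rate. The sketch compares raw speed only; it says nothing about the reasoning, planning and learning abilities that AGI would also require.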

The third caliber, artificial superintelligence (ASI), refers to supercomputers whose intelligence exceeds that of humans in just about every area, including creativity, wisdom and social intelligence. This category is the focus of science-fiction writers who imagine horrific scenarios in which the machines come to realize that humans are the problem and decide to eradicate all of humanity.

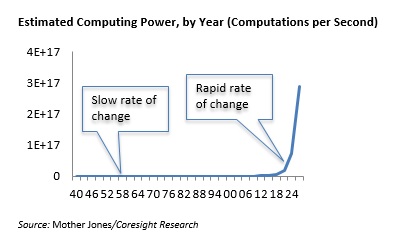

Today, it appears that we are stuck at the ANI level and that the second and third levels of AI are still incredibly far off. What is different for those of us currently alive is that we have always lived in times of continual technological improvement, the rate of which continues to accelerate. Compare the slow rate of technological improvement during the first half of the 20th century with the period since, which saw milestones such as the invention and improvement of the transistor in the late 1940s and early 1950s, the launch of the IBM PC in 1981 and the launch of the smartphone about 10 years ago. One driver of technological change is Moore’s law, named for Intel cofounder Gordon Moore’s observation that the number of transistors that could be incorporated in an integrated circuit (which drives computing power) appeared to double at a regular interval, popularly quoted as every 18 months (Moore’s own revised estimate was every 24 months). Computing power has broadly followed this curve, which continues to push computation speeds to higher and higher numbers of petaflops.
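To get a feel for how much the doubling period matters, the short Python sketch below computes the growth factor implied by a fixed doubling interval. The function name `growth_factor` and the 10-year horizon are illustrative choices made here, and the model is an idealized one in which doubling continues at a constant rate.

```python
def growth_factor(years: float, doubling_period_months: float = 24.0) -> float:
    """Growth multiple after `years`, assuming a constant doubling period.

    Idealized model: capacity doubles every `doubling_period_months`
    months, with no slowdown over the whole span.
    """
    doublings = years * 12.0 / doubling_period_months
    return 2.0 ** doublings


# Compare the two doubling periods mentioned in the text over 10 years.
for months in (18, 24):
    factor = growth_factor(10, months)
    print(f"doubling every {months} months -> about {factor:.0f}x in 10 years")
```

With a 24-month doubling period, ten years gives five doublings, or a 32-fold increase; with an 18-month period, the same decade gives roughly a 100-fold increase. The gap between the two figures shows how sensitive long-range projections are to the assumed doubling rate.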

The figure below shows the currently available computing power, by year (which follows Moore’s law). We are living in the time depicted on the right side of the curve, where growth is exponential and the increases are steepest.

We are likely to continue to see an accelerating rate of technological change, fueled by ever-increasing computing power that is (hopefully) propelling us toward a future in which intelligent machines can help us solve problems, cure diseases and generally benefit society.
